Most enterprise AI programs don’t fail because they chose the wrong model. They fail because nobody solved the harder problem first.

Your organization already possesses the answers to most of its critical business questions. They exist in contract negotiations finalized two years ago, in customer service transcripts from last quarter, in the Slack thread where your best engineer explained exactly why a product decision went wrong. The knowledge is there. The intelligence is not, because the two have never been properly connected.

This is the defining challenge of enterprise AI in 2026.

The Real Reason AI Pilots Don’t Scale

IDC research puts a stark number on the gap: only 1% of organizations have reached optimized AI maturity. The other 99% are running pilots that impress in demos and stall in production.

The conventional explanation blames data quality, change management, or unclear use cases. These are real, but they’re symptoms. The root cause is more fundamental: enterprise AI systems are evaluated on their intelligence, while the integration layer (the infrastructure that determines what the AI can actually see, access, and act on) is treated as an afterthought.

Consider what sits outside the reach of most enterprise AI deployments today: the customer contracts living in SharePoint, the pipeline data locked in Salesforce, the financial models in SAP, the project history in Jira, the institutional knowledge exchanged daily in Slack and email. Each system has its own authentication, its own API logic, its own access controls. Connecting them isn’t a configuration task. It’s an architectural undertaking.

Organizations that treat enterprise AI as a technology purchase rather than a services engagement consistently arrive at the same destination: a sophisticated model with nowhere meaningful to look.

From Retrieval to Action: The Agentic Difference

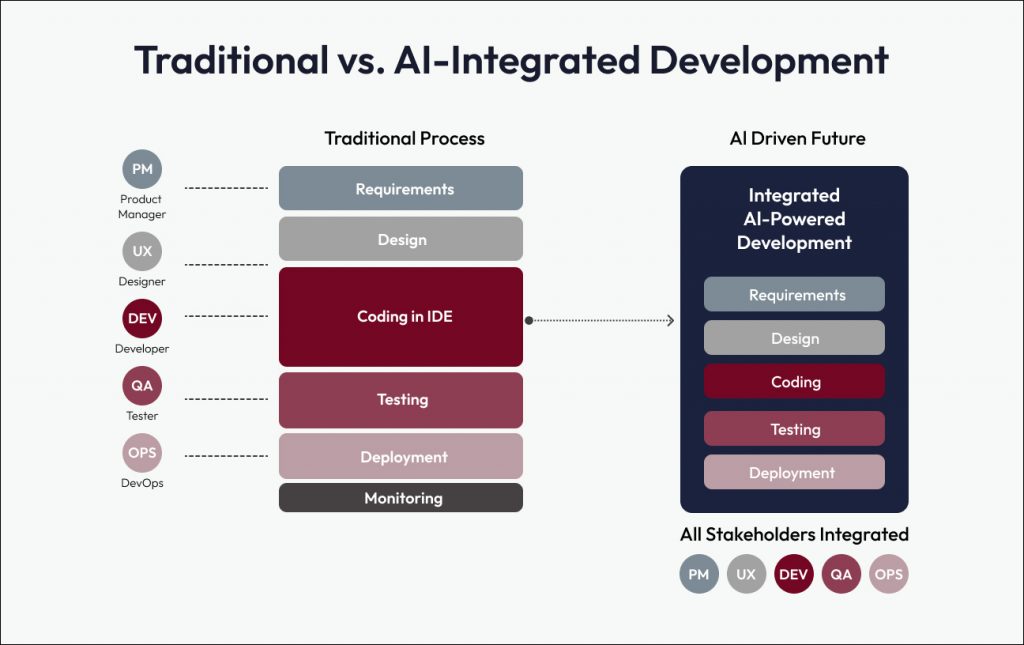

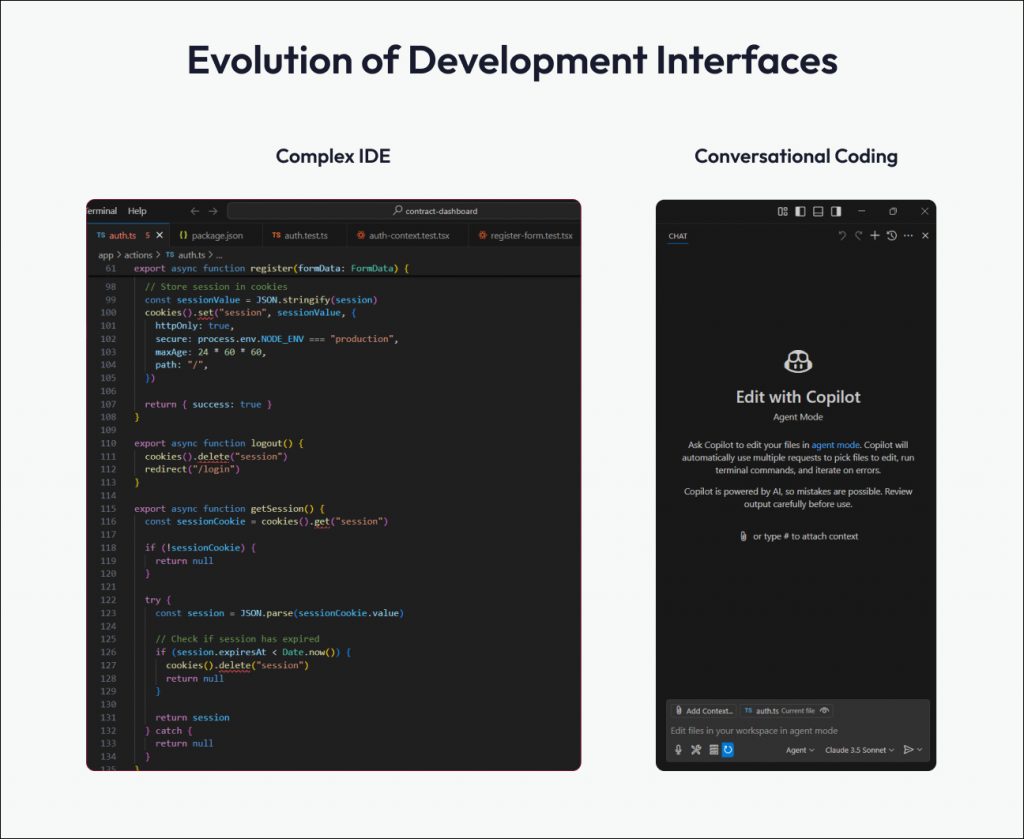

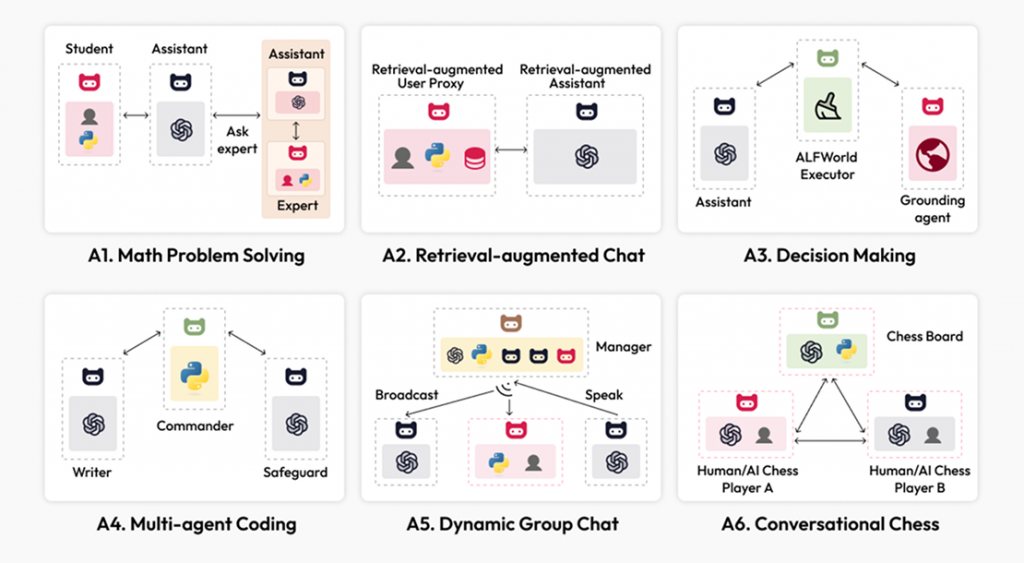

The first generation of enterprise chatbots was built around retrieval. Ask a question, get an answer, maybe get a link.

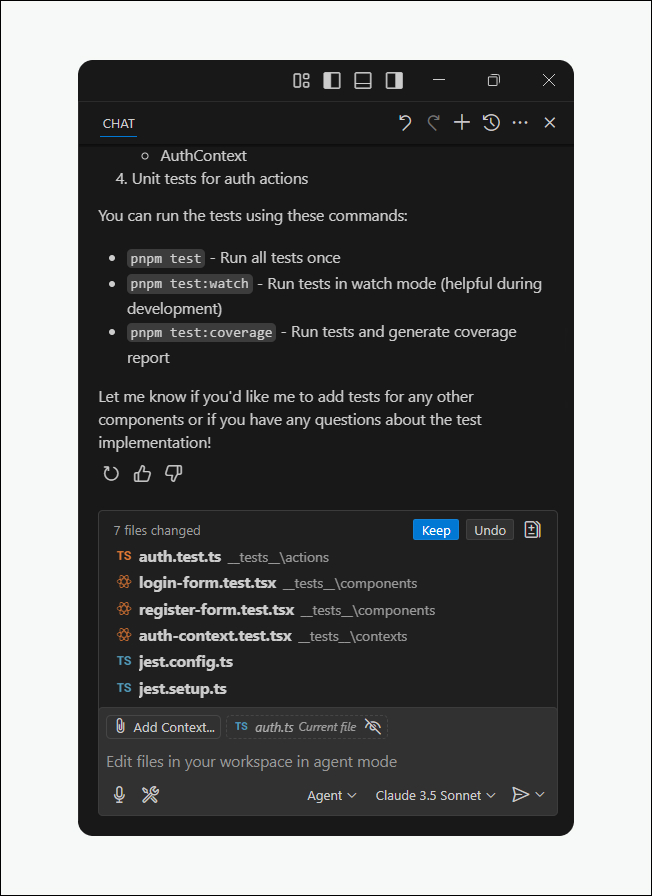

Agentic AI operates on a different principle entirely. It doesn’t wait to be asked; it reasons, plans, and acts.

Here’s what that distinction looks like in practice. A project manager asks about the status of a project. A retrieval-based system provides the last status update. An agentic system does something categorically different: it identifies that the project margin has dropped below threshold, checks the relevant executive’s availability in Outlook, schedules a briefing, and pre-generates a report showing margin trends and contributing factors, all before a human has processed the initial answer.

The problem isn’t just flagged. The response is already in motion.
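
As a rough sketch of that difference, the snippet below contrasts a retrieval-style answer with an agentic plan. Everything in it is hypothetical: the `ProjectStatus` fields, the 20% margin threshold, and the action names are placeholders for real calls into systems such as Outlook or a reporting tool.

```python
from dataclasses import dataclass


@dataclass
class ProjectStatus:
    name: str
    last_update: str
    margin: float  # fraction, e.g. 0.12 means 12%


MARGIN_THRESHOLD = 0.20  # hypothetical policy value, not from any real system


def retrieval_answer(status: ProjectStatus) -> str:
    """A retrieval-based system stops at the last known update."""
    return status.last_update


def agentic_plan(status: ProjectStatus) -> list[str]:
    """An agentic system answers, then plans follow-up actions if needed."""
    actions = [f"answer: {status.last_update}"]
    if status.margin < MARGIN_THRESHOLD:
        # Each entry stands in for a call to an external system.
        actions += [
            "check_executive_availability",
            "schedule_briefing",
            "generate_margin_report",
        ]
    return actions


status = ProjectStatus("Project A", "Milestone 3 delivered", margin=0.12)
print(retrieval_answer(status))
print(agentic_plan(status))
```

In a real deployment each planned action would be a permission-checked call through the integration layer, which is why the quality of that layer decides what the agent can actually do.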

This is the shift that separates enterprise AI as a productivity feature from enterprise AI as a strategic capability. And it depends entirely on the quality of the integration layer beneath it.

Why This Is an Integration Problem, Not an LLM Problem

Choosing a large language model is the easiest decision in an enterprise AI deployment. The hard decisions come before and after: What data can the system access? Under what permissions? How does it authenticate across systems? How does it handle a workflow that spans four platforms and three departments? What happens when a step fails mid-execution?

These are not model questions. They are architecture questions, and they require a fundamentally different set of competencies to answer well.

A production-ready enterprise conversational AI system must:

- Connect securely to heterogeneous platforms with different APIs and authentication frameworks

- Understand business logic deeply enough to orchestrate coherent multi-step workflows

- Enforce role-based access controls that reflect real organizational boundaries: finance accesses financial projections, HR accesses employee records, and neither reaches into the other’s domain

- Maintain comprehensive audit trails that satisfy compliance and governance requirements

- Degrade gracefully when a system is unavailable or a workflow hits an unexpected state
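
To make the last requirement concrete, here is a minimal sketch of a multi-step workflow that degrades gracefully when one system is unavailable. The step names, the `SystemUnavailable` exception, and the `escalate_to_human` fallback are illustrative assumptions, not part of any specific product.

```python
from typing import Callable


class SystemUnavailable(Exception):
    """Raised by a step when its backing system cannot be reached."""


def run_workflow(steps: list[tuple[str, Callable[[], str]]]) -> dict:
    """Run steps in order; stop at the first failure and report what completed."""
    completed: list[str] = []
    for name, step in steps:
        try:
            step()
        except SystemUnavailable as exc:
            # Degrade gracefully: keep the completed work and hand off to a human.
            return {
                "status": "partial",
                "completed": completed,
                "failed_step": name,
                "reason": str(exc),
                "fallback": "escalate_to_human",
            }
        completed.append(name)
    return {"status": "ok", "completed": completed}


def fetch_contract() -> str:
    return "contract text"


def fetch_pipeline() -> str:
    # Simulates an outage in one of the connected systems.
    raise SystemUnavailable("CRM timed out")


result = run_workflow([
    ("fetch_contract", fetch_contract),
    ("fetch_pipeline", fetch_pipeline),
    ("draft_summary", lambda: "summary"),
])
print(result)
```

The point of the design is that a failure midway produces a clear, partial result rather than a silent error or a fabricated answer.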

Off-the-shelf tools don’t navigate this complexity. The organizations consistently achieving measurable returns from enterprise AI share a common trait: they invested in deep integration architecture before they optimized for AI performance.

What This Looks Like in Production

Turning 250GB of Stranded Data into a 30-Second Answer

A leading New York-based renewable energy company had a data problem that will sound familiar: large volumes of unstructured documents, siloed across multiple systems and formats, producing slow, manual, and unreliable reporting. The intelligence existed. The access didn’t.

We built a conversational analytics platform that changed the fundamental question from “who do I ask?” to “what do I want to know?” The system processes 250GB of unstructured data, makes over 3,000 documents and reports searchable through natural voice or text, and achieves 85–90% accuracy on proprietary data. Information retrieval that previously required 1–2 hours of manual effort now takes under 30 seconds.

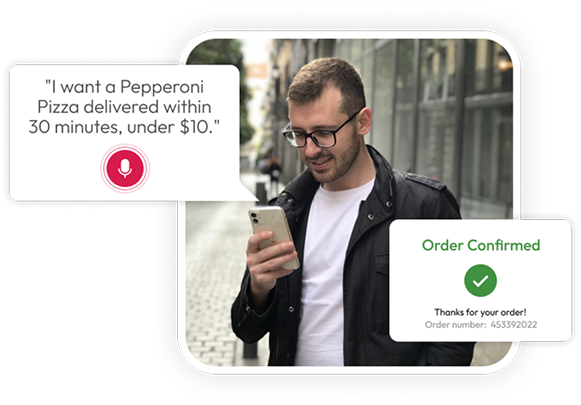

Decision-makers can now query complex datasets in plain language, asking questions such as “What’s our energy production trend this quarter?”, and receive accurate, sourced answers without waiting for a reporting cycle. The bottleneck between data and decision has been effectively removed.

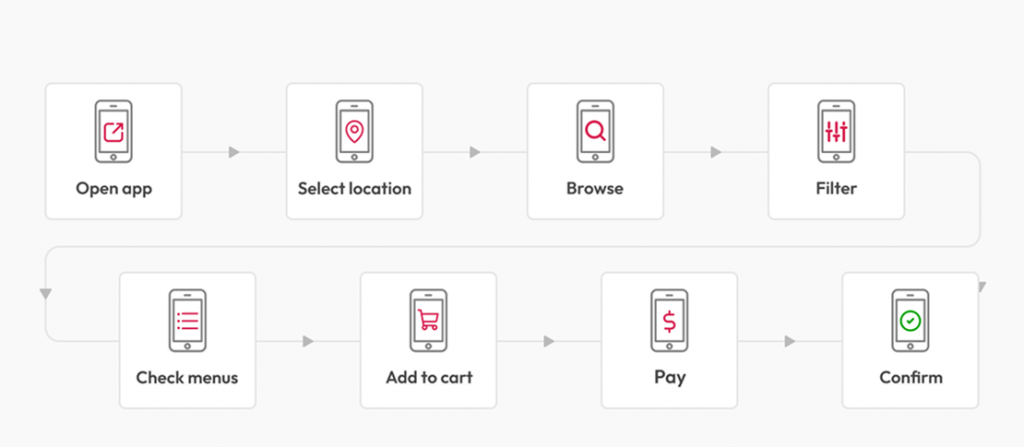

Resolving Customer Friction at Scale

A major U.S. credit provider was absorbing a growing volume of customer complaints tied to basic account services. Customers who couldn’t or wouldn’t use the mobile app were defaulting to live agent calls for transactions that should have been self-service. Agents were overwhelmed. The experience was deteriorating.

We deployed an Intelligent Virtual Assistant using Amazon Lex and Connect that lets customers access their accounts through natural conversation, by phone or chat, in English or Spanish. The system handles authentication, balance inquiries, payments, and account updates without agent involvement. Agent workload dropped significantly as routine queries shifted to self-service, and the architecture is built to scale conversational AI across both voice and chat channels as the business grows.

Rebuilding HR Operations Around Intelligence

A services organization replaced its fragmented HR workflows with a coordinated system of five specialized AI agents, each purpose-built for a distinct function within talent operations.

The results were structural, not incremental. HR query response time dropped from hours to seconds. Candidate matching shifted from keyword filtering to vector-based skills and experience analysis, surfacing better-fit candidates earlier in the pipeline. Automated agents now handle job description generation, resume standardization, and candidate gap analysis, freeing recruiter time for relationship-building and strategic hiring decisions.
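
For readers unfamiliar with the idea, vector-based matching can be sketched in a few lines. This is an illustration only, not the system described above: the three-dimensional vectors are hand-made stand-ins for embeddings that a real deployment would obtain from an embedding model, and the candidate names are invented.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def rank_candidates(job: list[float], candidates: dict[str, list[float]]) -> list[str]:
    """Return candidate names ordered from best to worst match."""
    return sorted(
        candidates,
        key=lambda name: cosine_similarity(job, candidates[name]),
        reverse=True,
    )


# Toy vectors; the dimensions loosely mean (python, cloud, sales).
job_vector = [0.9, 0.8, 0.0]
candidates = {
    "candidate_a": [0.8, 0.9, 0.1],
    "candidate_b": [0.1, 0.0, 0.9],
    "candidate_c": [0.9, 0.1, 0.0],
}
print(rank_candidates(job_vector, candidates))
```

Unlike keyword filtering, the ranking reflects how close a whole profile is to the role, so a candidate with a strong but partial fit still ranks above one with no overlap.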

The team didn’t just get faster. They got to do different, higher-value work.

The Governance Imperative

Enterprise conversational AI systems access sensitive data and execute actions on behalf of employees. The governance architecture is not optional; it is the system.

Role-based access control must be enforced at the integration layer, not the application layer. This means connecting to identity and access management systems, defining and enforcing permission policies that reflect real organizational structure, and maintaining audit trails detailed enough to answer the question “who accessed what, when, and why” under regulatory scrutiny.

Permissions must also be dynamically updated as roles change, as organizational structures evolve, and as new data sources come online. Static governance frameworks become liabilities in organizations that move fast. The architecture must move with them.
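
A minimal sketch of what enforcement at the integration layer can look like follows. The `POLICY` table, the role and resource names, and the `fetch_resource` function are all assumptions made for illustration; in practice the policy would be derived from the identity and access management system rather than hard-coded.

```python
from datetime import datetime, timezone

# Hypothetical role-to-resource policy, hard-coded here for illustration.
POLICY = {
    "finance": {"financial_projections"},
    "hr": {"employee_records"},
}

audit_log: list[dict] = []


def fetch_resource(user: str, role: str, resource: str, reason: str) -> str:
    """Check permission and record who accessed what, when, and why."""
    allowed = resource in POLICY.get(role, set())
    # Denied attempts are logged too, so the trail is complete.
    audit_log.append({
        "who": user,
        "what": resource,
        "when": datetime.now(timezone.utc).isoformat(),
        "why": reason,
        "allowed": allowed,
    })
    if not allowed:
        raise PermissionError(f"role '{role}' may not access '{resource}'")
    return f"<contents of {resource}>"


print(fetch_resource("ana", "finance", "financial_projections", "Q3 forecast"))

try:
    fetch_resource("raj", "hr", "financial_projections", "ad hoc request")
except PermissionError as exc:
    print("denied:", exc)

# The policy is consulted on every call, so a change takes effect immediately.
POLICY["hr"].add("headcount_plan")
print(fetch_resource("raj", "hr", "headcount_plan", "hiring review"))
```

Because the check lives in the function that fetches the data, no chat interface or agent built on top of it can bypass the policy or skip the audit entry.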

The Path from Pilot to Production

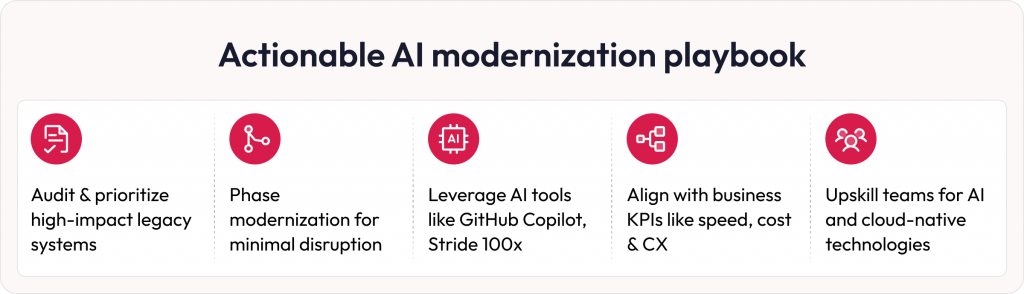

The organizations that successfully scale enterprise AI share a consistent pattern. They start with a specific, measurable business problem.

“Reduce customer service resolution time by 40%” is a strategy. “Implement conversational AI” is a purchase.

From there, the progression looks like this:

Assess before you build

Can your critical systems be accessed programmatically? Is your data governance consistent enough to support AI workflows? Are your authentication frameworks ready for agent-level access? These questions determine your actual starting point, which is often different from where leadership assumes the organization is.

Win where the integration is tractable

Internal knowledge access and employee-facing workflows are higher-value, lower-complexity starting points than customer-facing or regulated use cases. Prove the model internally before exposing it externally.

Measure what matters

Track task completion rates, time saved per workflow, reduction in manual escalations, and direct impact on business outcomes. AI accuracy is a proxy metric. Business impact is the real one.
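
One way to keep those measures honest is to compute them from logged workflow runs. The sketch below assumes a hypothetical `WorkflowRun` record, and the sample values are made up purely to show the arithmetic.

```python
from dataclasses import dataclass


@dataclass
class WorkflowRun:
    completed: bool
    escalated: bool
    minutes_taken: float
    baseline_minutes: float  # manual effort for the same task before automation


def summarize(runs: list[WorkflowRun]) -> dict[str, float]:
    """Aggregate business-facing metrics over a batch of runs."""
    total = len(runs)
    return {
        "task_completion_rate": sum(r.completed for r in runs) / total,
        "escalation_rate": sum(r.escalated for r in runs) / total,
        "avg_minutes_saved": sum(r.baseline_minutes - r.minutes_taken for r in runs) / total,
    }


# Made-up sample runs, for illustration only.
runs = [
    WorkflowRun(True, False, 0.5, 90.0),
    WorkflowRun(True, False, 0.5, 60.0),
    WorkflowRun(False, True, 5.0, 60.0),
    WorkflowRun(True, False, 1.0, 120.0),
]
print(summarize(runs))
```

Tracking these alongside model accuracy makes it easier to see whether an accuracy gain actually changes outcomes for the business.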

Build for continuous improvement

The organizations extracting the most value from conversational AI have stopped thinking of it as a project. They have cross-functional teams iterating on the system continuously, based on user behavior, new data sources, and evolving business priorities.

The Window Is Open, But Not Indefinitely

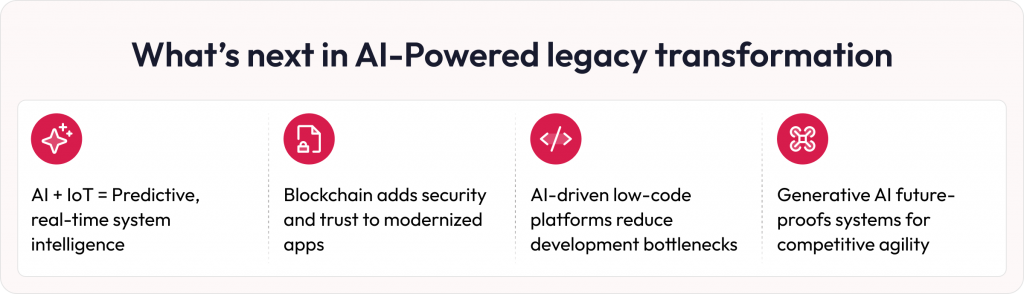

Early adopters of agentic enterprise AI are accumulating a structural advantage: faster decisions, lower operational overhead, and compounding returns as their systems learn and expand. Organizations still operating basic retrieval chatbots are not standing still; they are falling behind relative to competitors who have solved the integration problem.

The question is no longer whether conversational AI belongs in the enterprise. It does. The question is whether your organization will treat it as a technology feature or an architectural capability, and whether you have the right partner to build it properly from the ground up.

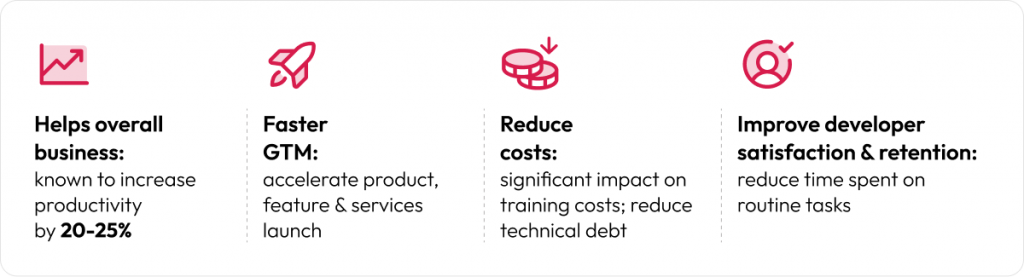

Robosoft Technologies designs, builds, and deploys production-grade conversational AI systems for enterprises that are ready to move beyond pilots. Our work sits at the intersection of deep integration architecture, AI orchestration, and enterprise governance because that’s where the real returns are.

Your data already holds the answers. Let’s build the AI systems to unlock them. Connect with our Data & AI team.